Light Forms: AI-Assisted Visual Music System

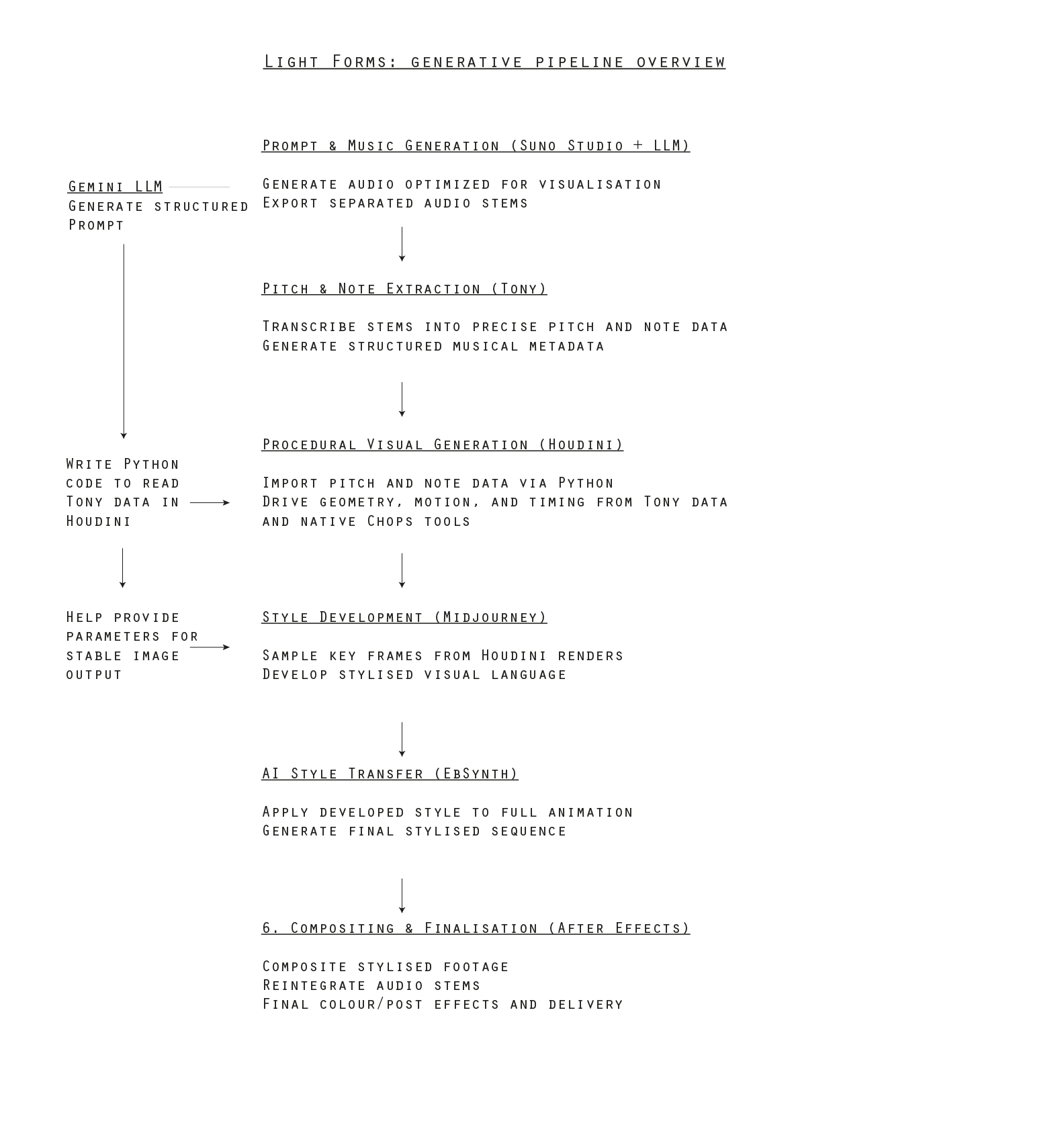

As an R&D project we set out to explore how AI tools could meaningfully extend our existing animation workflow, rather than replace it. We combined AI music synthesis, procedural animation and neural style transfer to build a fully music-driven piece, using sound as both the emotional and structural foundation.

The aim wasn’t simply to generate media with AI but to see where it could sit inside a pipeline we already understand well. In practice, that meant using AI in two distinct ways: first as a creative generator (music, visual style), and second as connective tissue, helping to structure ideas, prototype logic and write the code that wires the system together.

The core of the animation, however, was still built and directed by hand. The musical data was translated into usable information but the interpretation of that data — how it drove geometry, timing, movement, density, rhythm — was designed deliberately inside Houdini. Most of the key decisions around motion, pacing, framing, and visual structure were shaped manually. The AI provided raw material and acceleration; the composition and animation language remained ours.

In that sense, the process became less about automation and more about amplification. AI expanded the palette, but it didn’t dictate the outcome. It introduced new layers — stylistic possibilities, generative variations, structural shortcuts — while the overall art direction and aesthetic judgement stayed firmly human.

We’ve tried to document the workflow clearly, not as a technical breakdown but as an insight into how these tools can be integrated into a contemporary animation pipeline. For us, the interesting space is in the overlap: procedural systems shaped by musical logic, guided by human design decisions, with AI operating somewhere between assistant and collaborator.

This piece is an experiment in that space — a test of how far we can push generative tools while still retaining authorship, intention and craft.

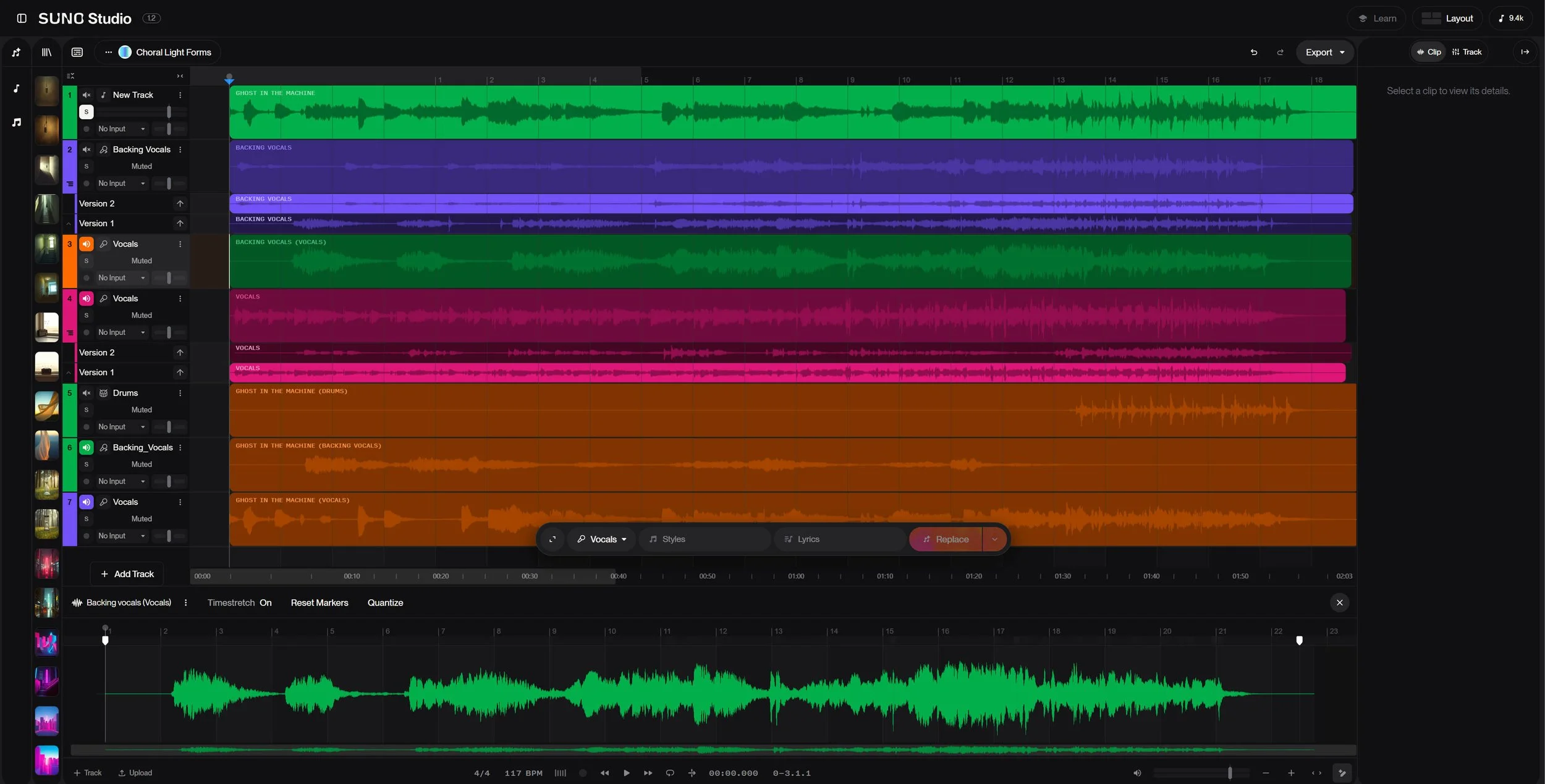

1. Music Generation & Stem Separation

We wrote the lyrics, then used Suno Studio to generate the music. The track was split into isolated stems for precise control, and a little finishing was done in Ableton.

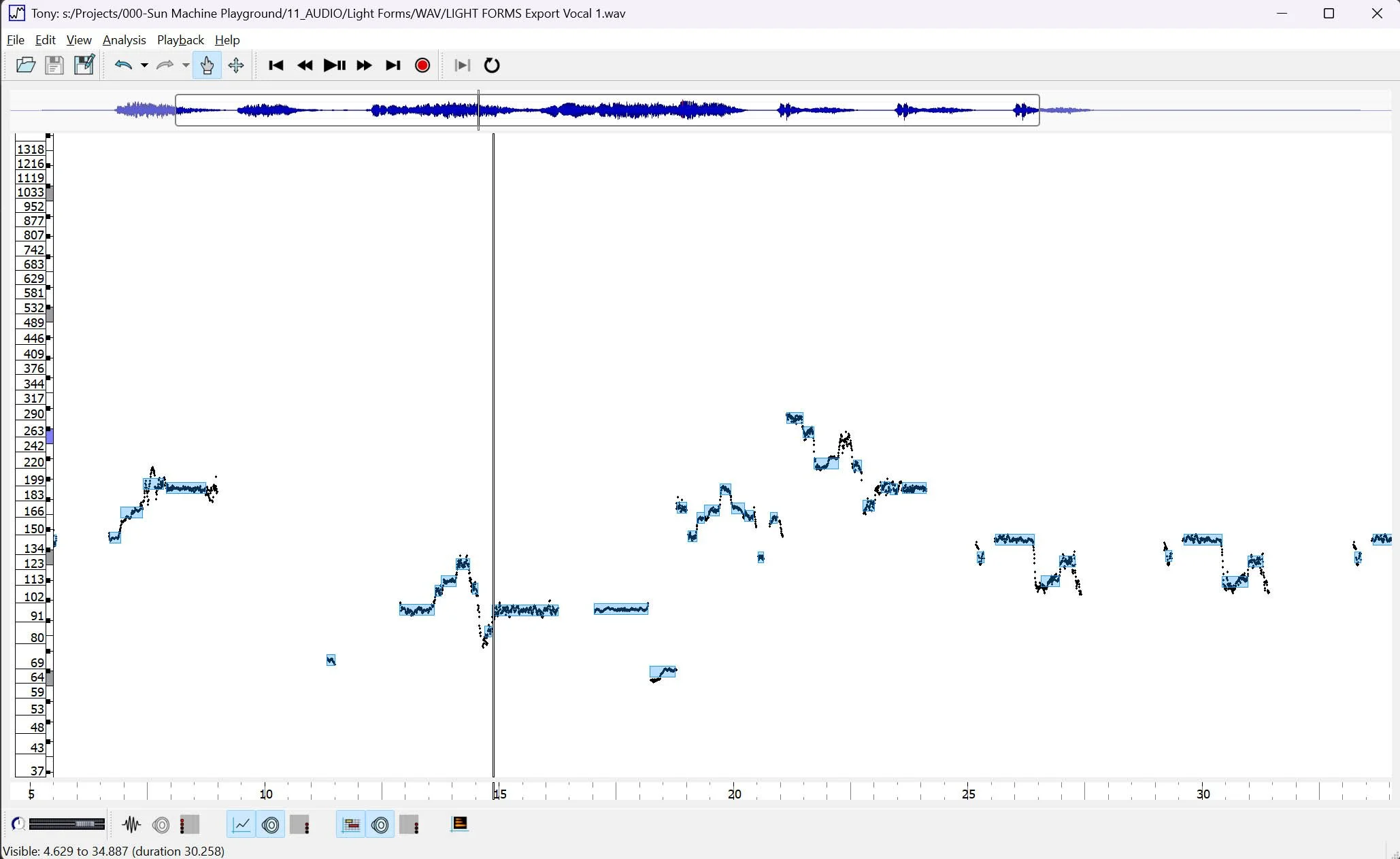

2. Pitch & Note Extraction

Tony is open-source software for transcribing pitch and assigning note values. The audio stems were fed into Tony, and the resulting pitch and note data was exported to data files.

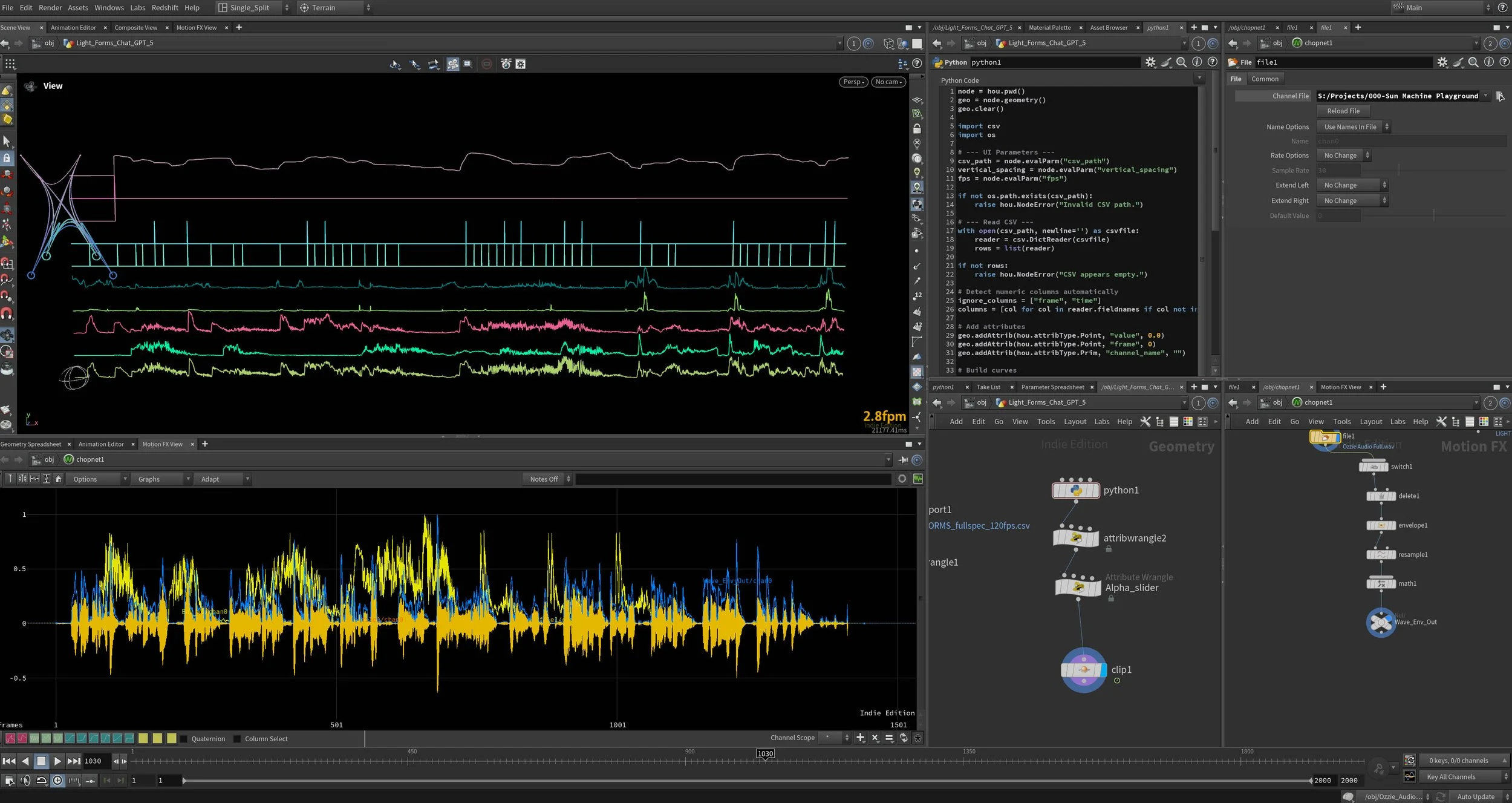

Gemini was used to write a script to import this data into Houdini.

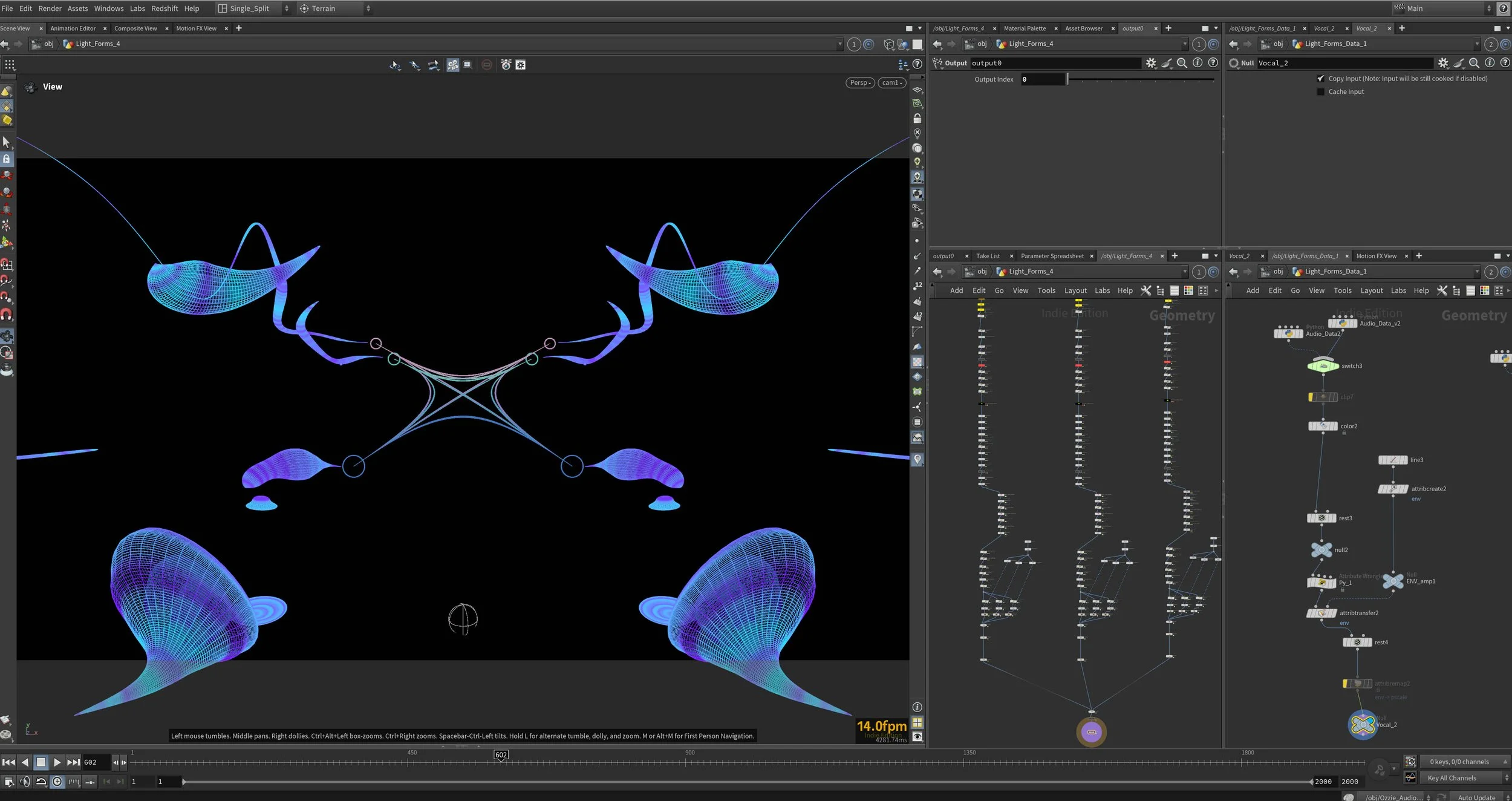
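As a rough illustration of what such an import step involves, here is a minimal Python sketch. It is not the script Gemini produced. It assumes the note export is a CSV with three columns per row (onset in seconds, frequency in Hz, duration in seconds), and the `Note` class, `parse_notes` function and the frame rate are all hypothetical names and values chosen for the example.

```python
import csv
import io
import math
from dataclasses import dataclass


@dataclass
class Note:
    start_frame: int   # first frame on which the note sounds
    end_frame: int     # frame on which the note ends
    midi: int          # nearest MIDI note number
    freq_hz: float     # transcribed frequency in Hz


def hz_to_midi(freq_hz: float) -> int:
    """Nearest MIDI note number, using A4 = 440 Hz = MIDI 69."""
    return round(69 + 12 * math.log2(freq_hz / 440.0))


def parse_notes(csv_text: str, fps: float = 25.0) -> list[Note]:
    """Parse rows of (onset s, frequency Hz, duration s) into frame-based notes.

    The column layout and the default fps are assumptions for this sketch.
    """
    notes = []
    for row in csv.reader(io.StringIO(csv_text)):
        if len(row) < 3:
            continue  # skip blank or incomplete rows
        try:
            onset, freq, duration = (float(v) for v in row[:3])
        except ValueError:
            continue  # skip a header row or other non-numeric line
        if freq <= 0:
            continue  # unvoiced or invalid pitch
        notes.append(Note(
            start_frame=round(onset * fps),
            end_frame=round((onset + duration) * fps),
            midi=hz_to_midi(freq),
            freq_hz=freq,
        ))
    return notes
```

Inside Houdini, a list like this could then be written to channels or point attributes. Converting seconds to frames at import time keeps everything downstream on the animation timeline.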

3. Audio Preparation in Houdini

The Tony data was loaded into Houdini, and some further basic audio analysis was done in CHOPs.
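The exact analysis isn't specified here, but a typical example of "basic" audio analysis is an amplitude envelope sampled once per animation frame. The plain-Python sketch below shows the idea outside Houdini. It is a stand-in for illustration rather than the CHOP network used on the project, and `rms_per_frame` is a hypothetical helper.

```python
import math


def rms_per_frame(samples, sample_rate, fps):
    """RMS amplitude of the audio samples that fall inside each animation frame.

    samples: sequence of floats (mono audio), sample_rate in Hz, fps in frames/s.
    """
    samples_per_frame = sample_rate / fps
    n_frames = math.ceil(len(samples) / samples_per_frame)
    envelope = []
    for frame in range(n_frames):
        lo = int(frame * samples_per_frame)
        hi = min(int((frame + 1) * samples_per_frame), len(samples))
        window = samples[lo:hi]
        if not window:
            envelope.append(0.0)
            continue
        mean_square = sum(s * s for s in window) / len(window)
        envelope.append(math.sqrt(mean_square))
    return envelope
```

An envelope like this gives one loudness value per frame for each stem, which is a convenient form for driving scale, density or emission in a procedural setup.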

4. Procedural Animation and Render

The mapped audio data for each stem was then applied in Houdini to drive the flowing animations, which were rendered with Redshift at 4K.

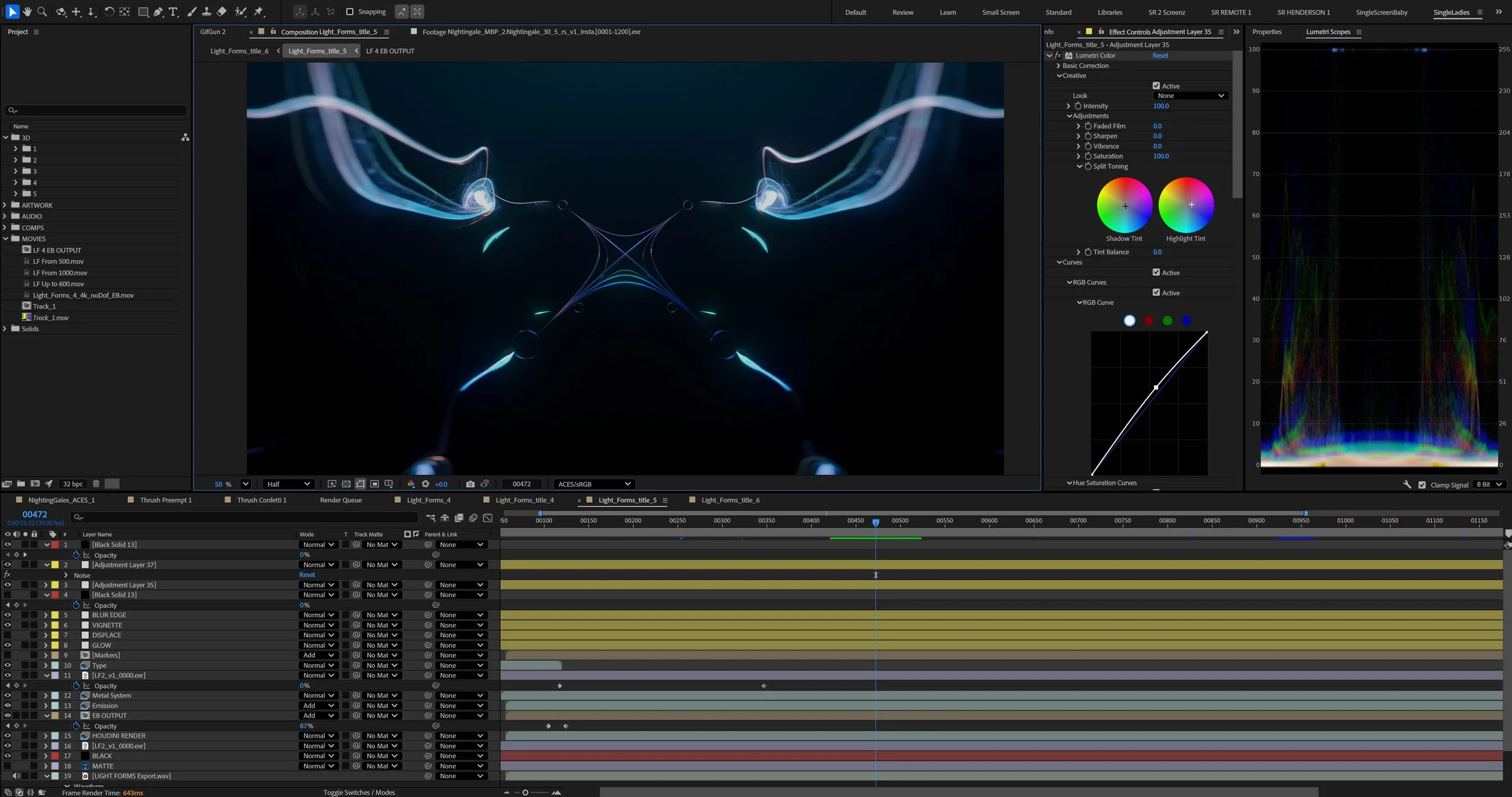
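To make "mapped audio data" more concrete, here is a small sketch of one possible mapping: turning note events into a per-frame value between 0 and 1 that a procedural parameter could read. The actual mappings were designed by hand in Houdini, so the function `pitch_curve`, the `(start_frame, end_frame, midi)` tuple layout and the pitch range are all assumptions made for the example.

```python
def pitch_curve(notes, n_frames, low_midi, high_midi):
    """Per-frame driving value in [0, 1] from note events.

    notes: iterable of (start_frame, end_frame, midi) tuples; end is exclusive.
    Frames with no note sounding stay at 0.0, so choose low_midi below the
    lowest expected note if silence must be distinguishable from a low pitch.
    """
    span = high_midi - low_midi
    if span <= 0:
        raise ValueError("high_midi must be greater than low_midi")
    curve = [0.0] * n_frames
    for start, end, midi in notes:
        # Normalise the pitch into the chosen range and clamp to [0, 1].
        value = min(max((midi - low_midi) / span, 0.0), 1.0)
        for frame in range(max(start, 0), min(end, n_frames)):
            curve[frame] = value
    return curve
```

For example, `pitch_curve([(0, 2, 60), (3, 5, 72)], 6, 48, 72)` holds a mid-range value for the first note, drops to zero in the gap, then rises to the maximum for the second. A curve like this could drive height, bend or colour, with the aesthetic choice of what maps to what left to the artist.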

5. Style Generation and Transfer

Midjourney’s retexture tool was used to restyle keyframes from the render while keeping their structure. Gemini was again useful for guiding the prompts so that the restyled frames stayed structurally and temporally consistent.

The Midjourney keyframes were then fed into EbSynth for the style transfer.

6. Compositing

After Effects was used to composite the final renders, reapply the audio and add some post effects.